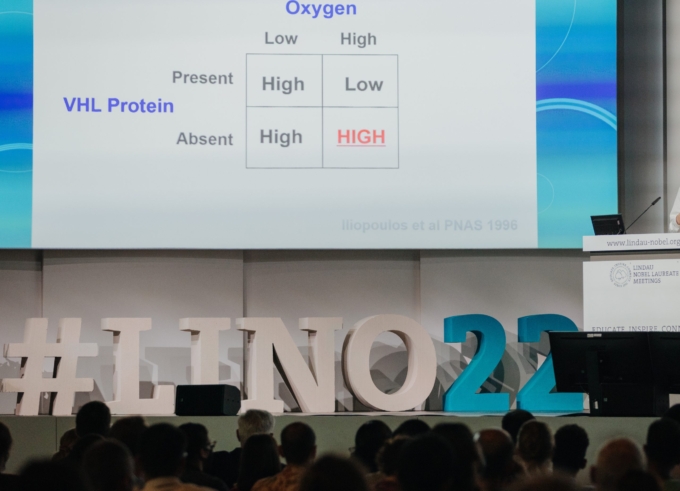

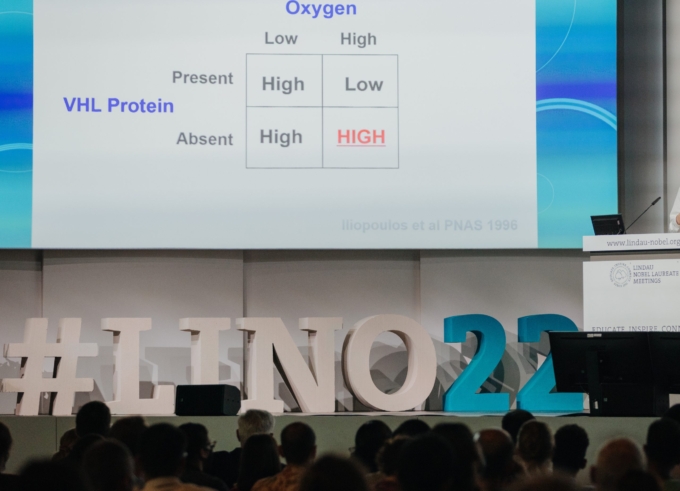

Daily Recap – Tuesday, 28 June 2022

The 71st Lindau Meeting is fully underway, and the third day of #LINO22 was once again packed with exciting sessions and opportunities for informal exchange.

Daily Recap – Monday, 27 June 2022

Today, #LINO22 offered plenty of opportunities for lively exchange, fruitful discussions and fun social events.

Daily Recap – Sunday, 26 June 2022

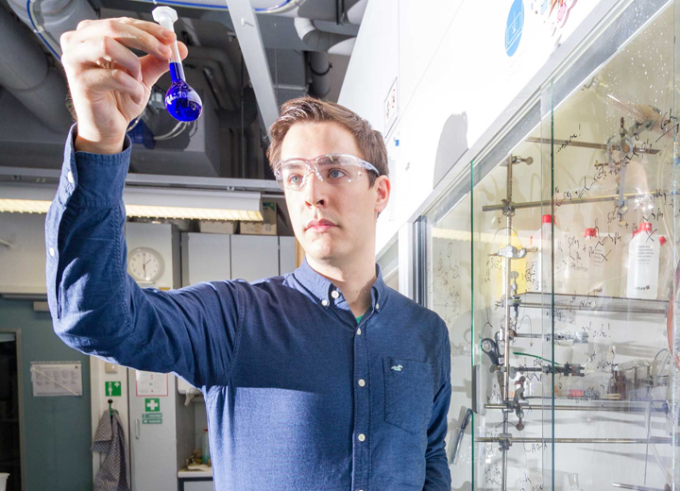

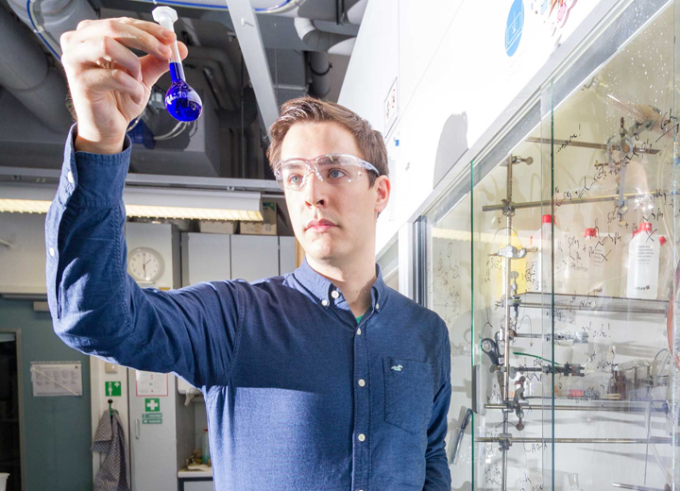

Opening day of the 71st Lindau Nobel Laureate Meeting: the opening ceremony featured greetings from several top-class guests. The academic programme then kicked off with a lecture by 2021 Nobel Laureate Sir David W.C. MacMillan and a panel discussion on "Trust in Science, Trust in Chemistry".

Nobel Prize in Chemistry 2021: A Greener and More Efficient Way of Chemical Synthesis

Benjamin List and David MacMillan receive the 2021 Nobel Prize in Chemistry for developing asymmetric organocatalysis, a powerful and sustainable method that has revolutionised the synthesis of many important compounds.

The Many Facets of Biology: How the Study of Life Impacts Antibiotics, Animal Beauty and Drug Design

#LINO70 offered an overview of current research in biology.

Young Scientists at #LINO70: Robert Mayer – Calculate the Chemical Mystery

Robert Mayer will participate in #LINO70. Last year he finished his PhD project, which helps to predict chemical reactions.

Young Scientists at #LINO70: Jayeeta Saha – A Green Pathway to Generate Hydrogen

Jayeeta Saha will participate in #LINO70. Her PhD project focuses on finding a way to generate hydrogen with the lowest possible energy penalty, as an alternative to fossil fuels.

Lindau Scientists’ Survey: Science From Home

The impact of the coronavirus pandemic on working processes in science: results from our COVID-19 survey.

Lindau Scientists’ Survey: Shift in Research Focus During First Year of the Pandemic

Year one of the pandemic: scientists explain how the coronavirus has changed their research topics.

Nobel Prize in Chemistry: The Age of CRISPR

Jennifer A. Doudna and Emmanuelle Charpentier have been awarded the 2020 Nobel Prize in Chemistry for developing the revolutionary CRISPR/Cas gene-editing tool.

The Challenges of Science Communication

Lindau alumna Arunima Roy on past and present challenges of science communication.

Filming the Most Fundamental Processes in Chemistry

With the help of an electron microscope, two of the most fundamental processes in chemistry – chemical bonding and crystal formation – have been caught on film for the first time.