Published 23 May 2018 by Melania Zauri

Reproducing Research Findings Comes at a Cost

The term ‘reproducibility crisis’ first appeared in a 2012 article that discussed the emerging problem of poor replicability of studies in the psychological sciences. The debate on whether such a crisis exists, and whether it applies only to some scientific fields, is still ongoing. The answer clearly depends on both the nature of the science in question and its methodology. If we take a step back and look at how the evidence for a crisis is collected, we find that one of the issues keeping the debate going ad infinitum is the difficulty of quantifying how widespread the phenomenon is. A recent article suggests that, measured by the number of retractions or comments, the problem does not appear to be growing dramatically. This, the author concludes, argues against the emergence of a reproducibility crisis and against its influence on scientific progress. Yet the issue may simply not be fully verifiable. As Nobel Laureate Randy Schekman, who will participate in the panel discussion ‘Publish or Perish’ during the 68th Lindau Meeting, pointed out in his lecture at the 2017 Sackler Colloquia, the problem is evident in the fact that information on replication studies lies mostly hidden within industry or individual research groups. Indeed, recent surveys among scientists show that incentives to publish reproducibility studies or, even more so, negative findings are low, and that those who try to publish such studies face obstacles: an estimated 10% of these studies end up in a published article.

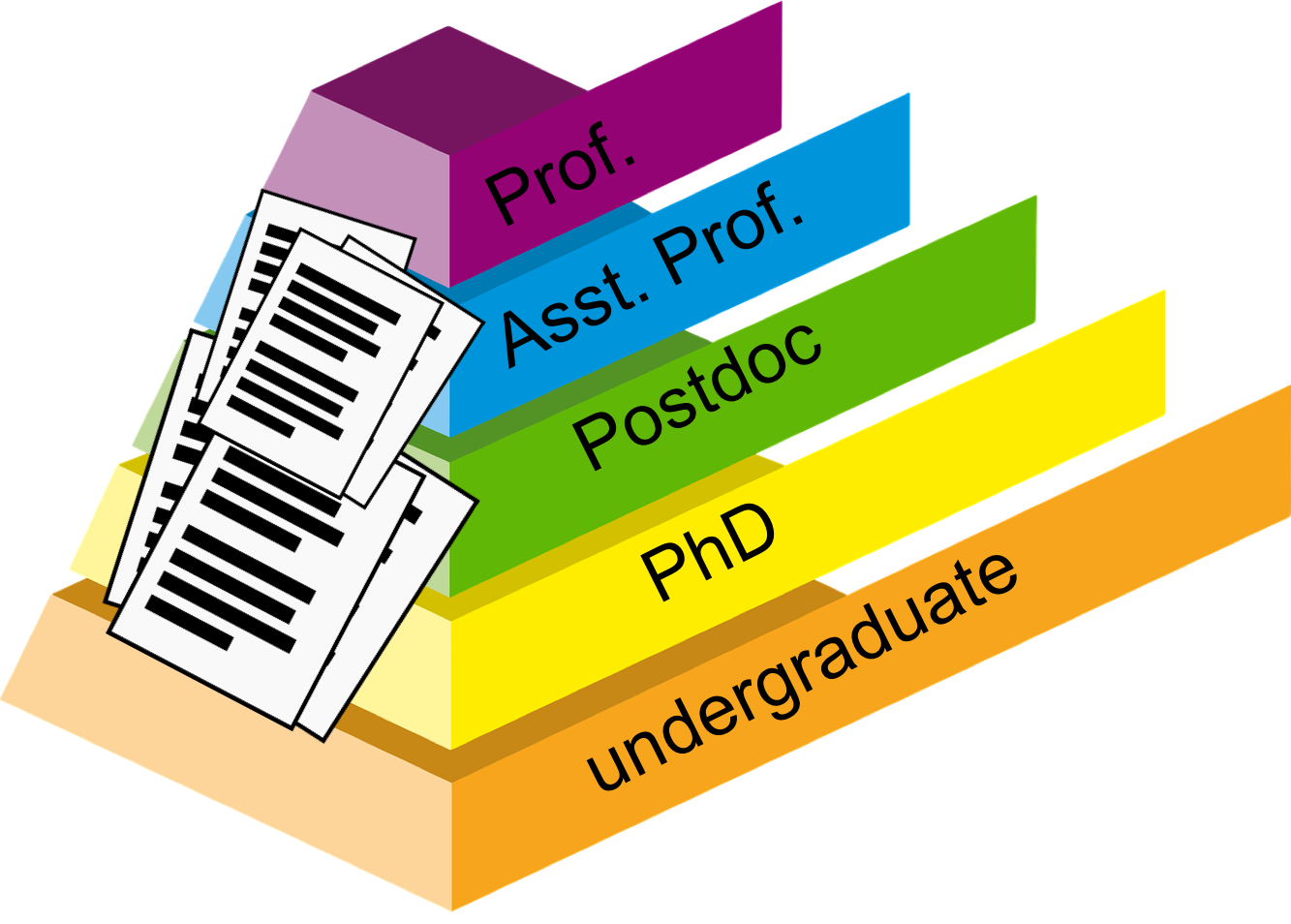

Scientific progress and research expenditure aside, we are looking at researchers who are rushing to secure a tenured position somewhere. How much of their time will be spent on reproducibility studies? Some researchers suggest that reproducing previous findings is only worth the cost (in terms of time and money) in the case of very innovative ideas, and many more scientists estimate the time they spend trying to reproduce other researchers’ findings at around 30% of the total time they have available for research. Thirty percent of a two-year fellowship amounts to 7.2 months, which significantly affects a scientist’s career progress. Public institutions and funding bodies increasingly consider the time elapsed since the PhD was awarded when giving independent grants to individual researchers, and they keep shortening the accepted timeframe. Does this particularly affect people who fail to build on previous knowledge?

Surveys suggest that the great majority of researchers cannot reproduce either their own findings or those of others. Unfortunately, little data is available on the number of scientific studies that are reproducible, and the lack of open communication on the subject among scientists, a dialogue essential to scientific growth, is sometimes identified as one of the causes. Only 20% of researchers who participated in a survey by Nature had been contacted because someone could not reproduce their work; this may be attributed to conflict avoidance or research secrecy.

One of the recent efforts to quantify the extent of irreproducible science in biomedical research is the ‘Reproducibility Project: Cancer Biology’, which attempts to reproduce 50 cancer studies that were selected for their high impact. The project is a collaboration between Science Exchange and the Center for Open Science and is funded by the Arnold Foundation. The analysis is carried out by researchers at specialised facilities and will, after peer review, be published in the journal eLife. The early data released are not comforting: only two of the five reports analysed were fully reproducible. Such a low rate could be a crucial factor in the disadvantage faced by researchers who cannot publish their work for lack of novelty, because they compete with those who have published high-impact, yet often irreproducible, studies. If funding bodies took the time spent on reproducing findings into account, this could significantly improve the career selection system. Time spent on reproducibility studies should be included in the debate on how scientists are evaluated.

Indeed, throughout the scientific endeavour, progress, as Newton famously put it, comes from the ability to see further by standing on the shoulders of giants. Whether the giants are big research groups, highly cited papers or any previous finding, we have to make sure that we can still safely stand on the shoulders of the large majority, if not all, of the published literature.