Nobel Prize in Chemistry 2023: Minuscule Colour-Emitting Dots

Tiny but mighty: Quantum dots are being used to improve and refine the colours that we can see in television sets and in lighting. The Nobel Prize in Chemistry 2023 is awarded to Moungi G. Bawendi, Louis E. Brus and Aleksey Yekimov for the discovery and development of these particles.

Sveriges Riksbank Prize in Economic Sciences 2023: Uncovering the Key Drivers Behind Gender Differences in Pay and Employment

Claudia Goldin will receive the Economics Prize for her pioneering research that explained why gender differences in earnings and employment rates had changed over time.

Nobel Prize in Physics 2023: From Nought to Three Nobels in a Billion Billion Attoseconds

Pierre Agostini, Ferenc Krausz and Anne L’Huillier will receive the 2023 Nobel Prize in Physics for their contribution to attosecond physics.

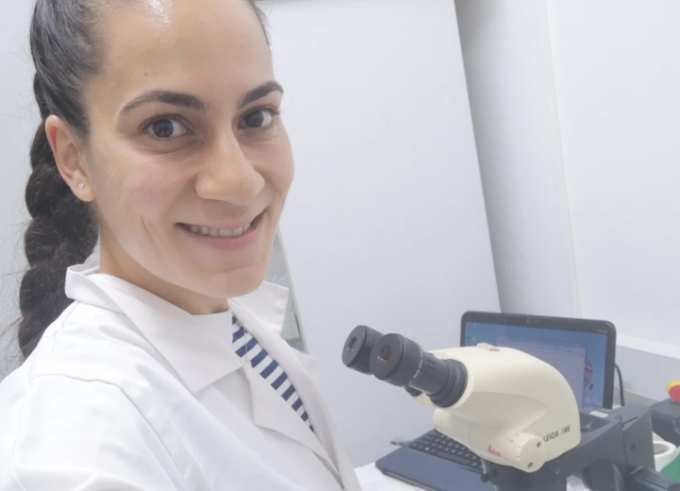

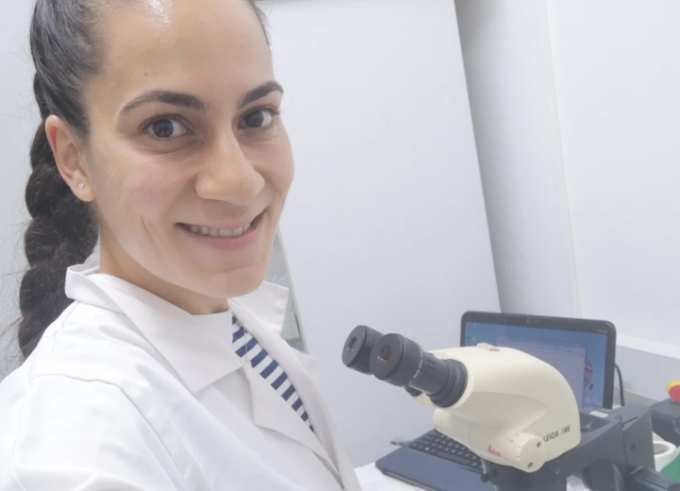

Women in Research #LINO23: Chryso Th. Pallari

Lindau Alumna Chryso Th. Pallari is conducting research in the fields of epidemiology, infectious diseases, and public health.

#LINO23 Alumna Shatarupa Bhattacharya: Enthusiasm and Motivation Matter

Shatarupa Bhattacharya focuses on the genetic disorder thalassemia as well as on blood-borne infectious diseases such as malaria and toxoplasmosis. She shared her #LINO23 experiences and reviewed her career as a female scientist from India.

Integrating Artificial Intelligence into Healthcare: Ethical Frontiers

Lindau Alumnus Polat Goktas participated in the Physics Meeting 2016. Learn more about his experiences in Lindau and his professional odyssey, which took him from Ankara to the corridors of Harvard Medical School and eventually to the historic beauty of Dublin, with a focus on various fields of artificial intelligence.

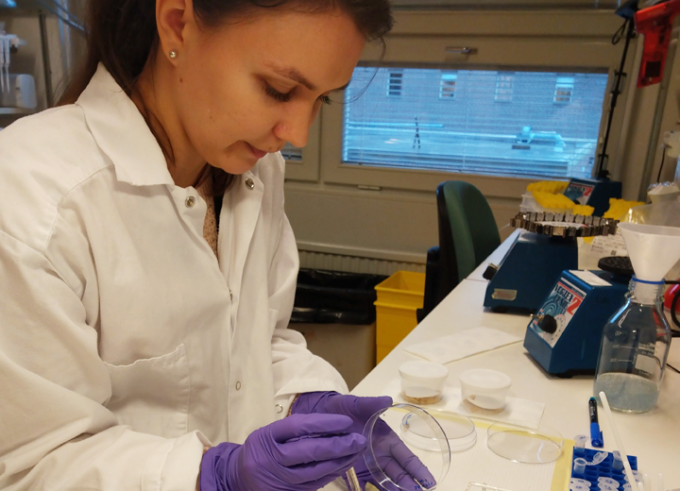

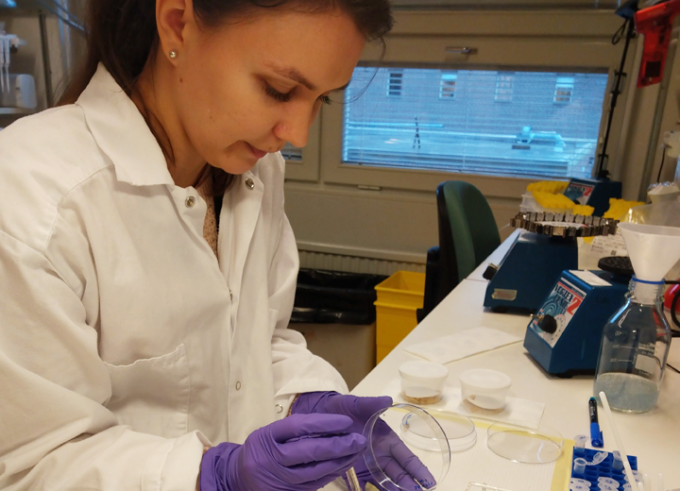

Women in Research #LINO23: Greta Zaborskyte

Greta studies how the critically important bacterial pathogen Klebsiella pneumoniae evolves inside human hosts during colonisation and infection, and explores how it can improve its biofilm formation.

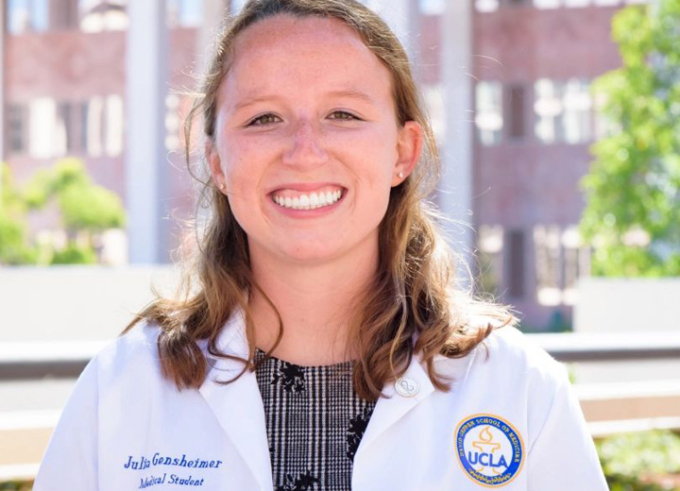

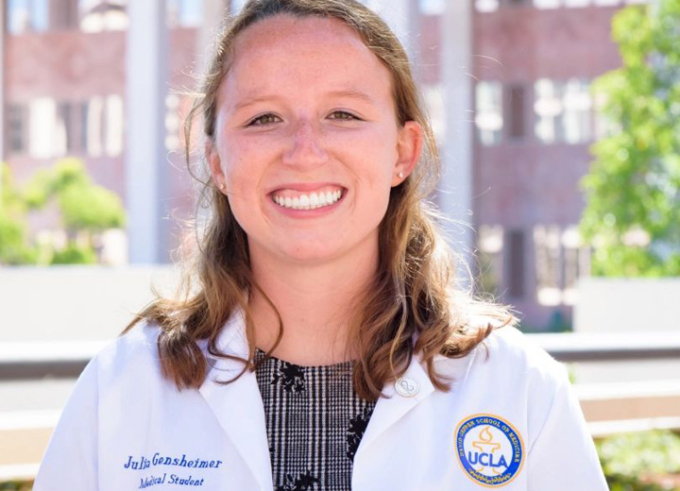

Women in Research #LINO23: Julia Gensheimer

Julia Gensheimer studies T cell development from hematopoietic stem and progenitor cells at UCLA. She hopes the findings will influence regenerative medicine and therapies for age-related diseases.

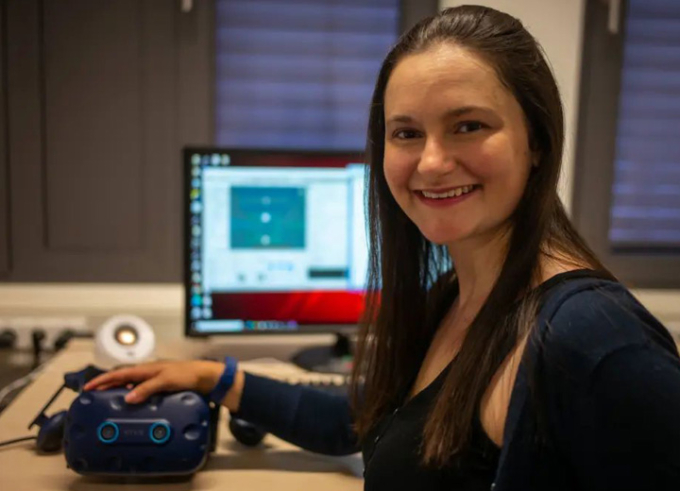

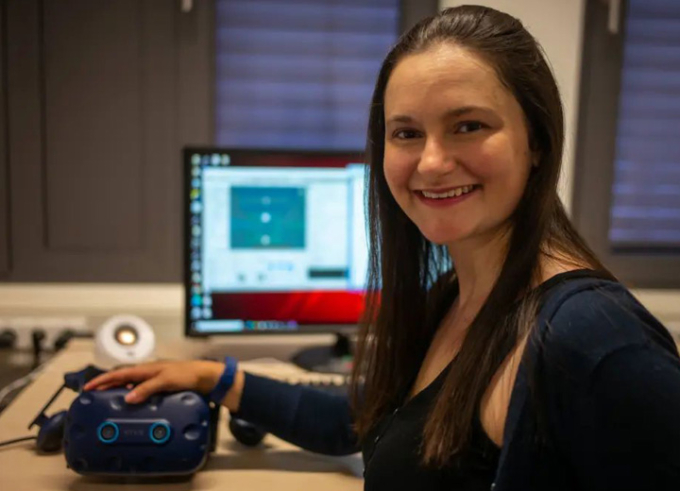

Women in Research #LINO23: Avi Aizenman

Our eyes are constantly moving, allowing us to process the world around us. Avi studies the eye movements we make as we move around our natural world and in environments like virtual reality. She is one of the "Women in Research" portrayed in the blog series by Ulrike Böhm.

Women in Research #LINO23: Sandra Wagner

The research of Sandra Wagner, postdoctoral researcher and deputy group leader at the University of Tübingen, Germany, concerns nearsightedness, termed myopia.

Addressing Climate Change at the Nexus of Technology, Business, and Policy

Climate change is forcing humanity to find solutions quickly that make an impact at scale. Xiangkun (Elvis) Cao, Schmidt Science Fellow at MIT, Activate Fellow, and 2022 Lindau Alumnus, works in the field of carbon management as one component of addressing climate change.

#LINO23: Immense Inspiration and a Change of Perspective

Five 2023 Lindau Alumnae who were interviewed for the blog series "Women in Research" reviewed their experiences in Lindau.