BLOG - Research

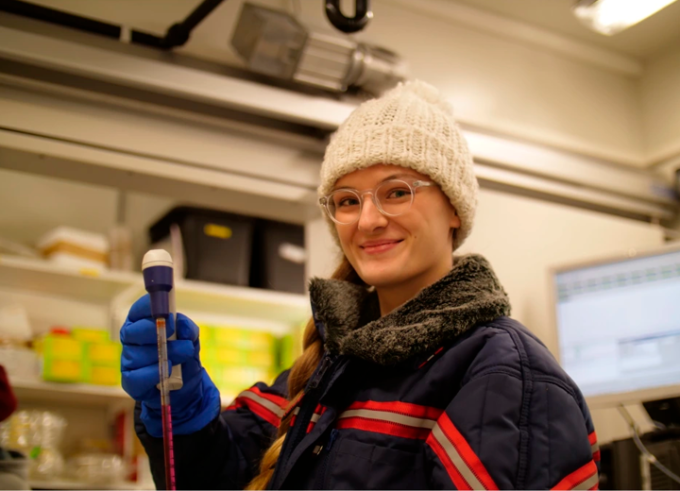

Women in Research #LINO23: Magdalena Migalska

Magdalena from Poland is a Postdoc at Jagiellonian University, Krakow, Poland. She studies the evolution of the vertebrate immune system, focusing on the major histocompatibility complex (MHC) – a set of molecules central to molecular self/non-self recognition and the adaptive immune response.

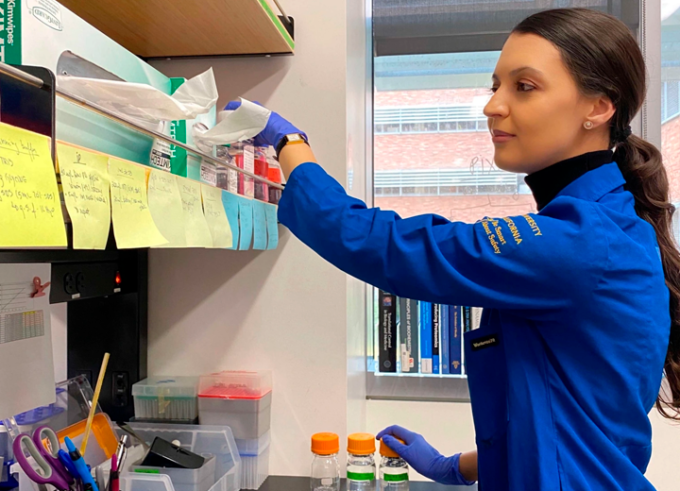

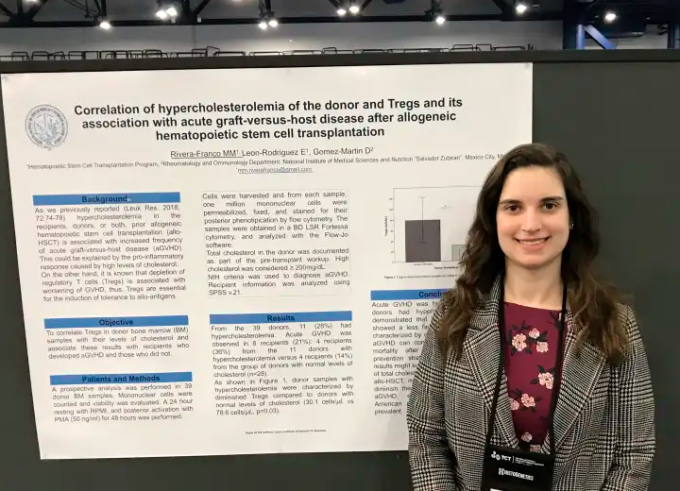

Women in Research #LINO23: Mónica Rivera-Franco

Mónica from Mexico is a Medical Researcher at Eurocord in Paris, France. Her work focuses on epidemiological and translational research in the fields of human leukocyte antigens, cord blood transplantation, and cellular therapies for benign and malignant hematological diseases.

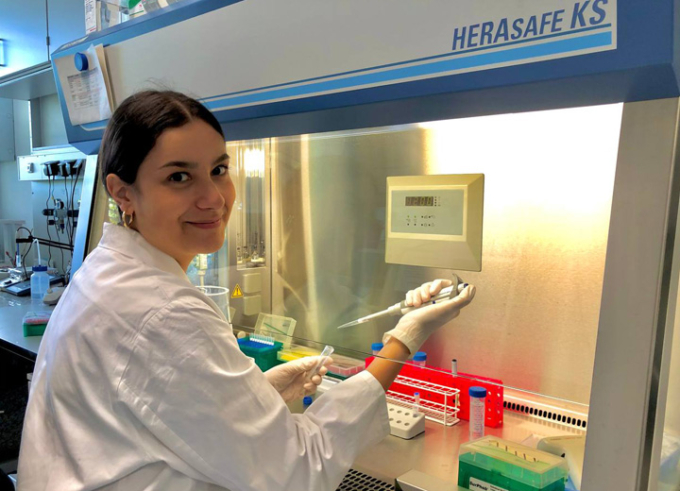

Women in Research #LINO23: Zuzanna Kozicka

Zuzanna from Poland is a Ph.D. graduate at the Friedrich Miescher Institute for Biomedical Research, Basel, Switzerland. Her research focuses on molecular glue degraders, a new paradigm in drug discovery that allows targeted destruction of disease-causing proteins.

Women in Research #LINO23: Mari Carmen Romero-Mulero

Mari Carmen from Spain is a Ph.D. student at the Max Planck Institute of Immunobiology and Epigenetics and the Faculty of Biology, University of Freiburg, Germany. Her project assesses how aging affects the physiology of the hematopoietic compartment.

Women in Research #LINO23: Yanira Méndez Gómez

Yanira from Cuba is a Marie Skłodowska-Curie Postdoctoral Fellow at the University of Cambridge, United Kingdom. Yanira’s research, which she conducts in Prof. Dr. Gonçalo Bernardes’ group, focuses on the discovery of new multicomponent reactions and their application to protein modification.

Women in Research #LINO23: Flávia Sousa

Flávia Sousa is a Senior Researcher at the Adolphe Merkle Institute, University of Fribourg, Switzerland. Her pioneering research focuses on encapsulating anti-angiogenic monoclonal antibodies and on understanding their efficacy in treating glioblastoma by normalizing the tumor vasculature and microenvironment.